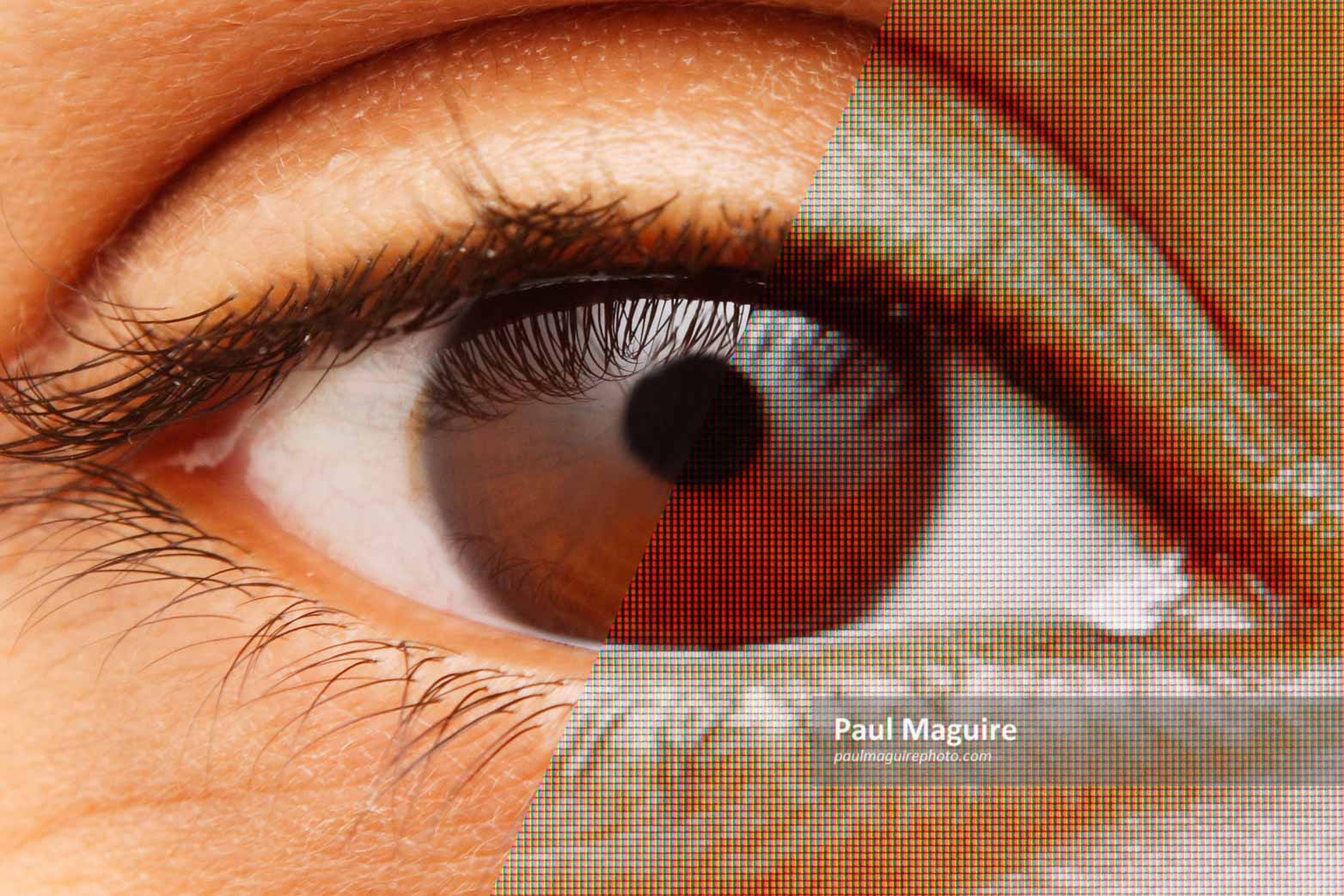

Just as we’re getting used to the idea of watching (and shooting) 4K video, now manufacturers are trying to convince us that 4K resolution just isn’t enough. It turns out that 4K is only Ultra High Definition (UHD), and pretty soon we are going to need Full Ultra High Definition, or 8K. So is 8K really the next big thing, or are we being sold a dud? Time to investigate.

The high resolution “arms race”

Around 2010, we first started to see a new breed of LED screens appear on the market. Apple launched its “retina” displays, with a resolution “to match the resolving power of the human eye”. Other manufacturers introduced similar technology as they each tried to outpace one another. By 2012, 4K TVs were starting to appear. This new standard, called Ultra-High Definition (UHD), offered a staggering four times as many pixels as Full HD TVs (or 1080p). The resolution of 1080p was already outstanding, so 4K was a colossal leap. Even now, videographers are struggling to upgrade their camera equipment and workflow to be able to record 4K. This means upgrading to ultra-fast broadband connections, purchasing bigger disk drives and faster memory cards, and buying a more powerful computer to handle it all.

Now 8K, or “Full” UHD, is just around the corner. Electronics giant Sharp have announced that a new “prosumer” camera will shoot 8K, and several TVs are already on the market, albeit with ludicrous price tags. 8K offers four times the pixel count of 4K for the ultimate in clarity and realism. Sound familiar? It should, because that’s exactly what 4K was sold on.

Reality check: a critical look at 4K

So has 4K really brought the benefit that it promised? The truth is, if you’re watching an average-sized TV from the comfort of a sofa, then it’s likely that the visible difference between 4K and 1080p is minimal, even non-existent. That’s because 4K was invented for the cinema, or home cinema, experience. For an average TV in people’s homes, 1080p is already plenty of resolution, and the extra resolution of 4K is mostly lost on the human eye. That’s not to say 4K doesn’t have its place: for a big screen experience, or for close work on a 32-inch computer monitor, the difference in picture quality between 1080p and 4K can be dramatic. That’s exactly what 4K was designed for. But for any other situation it is usually overkill.

So where does 8K fit in if the benefits of 4K were already marginal? Is it just a cynical attempt by manufacturers to get us to update our TV sets and camera equipment, yet again? Or will it usher in a new age of stunning image quality? To answer that question properly, we have to delve a little deeper into the world of pixel density and visual acuity.

Why does resolution matter? Here are the facts

The ultimate goal of these incredibly high resolution screens is to match the resolving limits of the human eye. This limit, called visual acuity, is the point at which the eye can no longer distinguish between two separate points. Visual acuity is measured not in pixels, but as a resolving angle. According to Michael F Deering of Sun Microsystems, a typical visual acuity is 0.47 arc minutes, or 0.0078 degrees. Our actual “visual resolution” is coarser than this, at around 1 arc minute, or 0.0167 degrees.

Armed with the above knowledge, plus a dash of trigonometry, we can relate screen resolution to both viewing distance and screen size. For a standard 16:9 widescreen and a visual resolution of 1 arc minute, we arrive at a simple formula:

Screen resolution (rows of pixels) = 140 x screen diagonal (inches) / viewing distance (feet)
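
For anyone who wants to check where the 140 comes from, here is a minimal Python sketch of the trigonometry. It assumes a flat 16:9 screen viewed head-on and a visual resolution of 1 arc minute per row of pixels; the function names (`rows_constant`, `ideal_rows`) are purely illustrative.

```python
import math

def rows_constant(aspect_w=16, aspect_h=9, acuity_arcmin=1.0):
    """Rows of pixels needed per (inch of diagonal / foot of viewing distance)."""
    # Screen height as a fraction of the diagonal (Pythagoras on the aspect ratio).
    height_fraction = aspect_h / math.hypot(aspect_w, aspect_h)
    # Height in inches of the smallest resolvable detail seen from 1 foot (12 inches).
    detail_in = 12 * math.tan(math.radians(acuity_arcmin / 60))
    return height_fraction / detail_in

def ideal_rows(diagonal_in, distance_ft):
    """Rows of pixels at which each row subtends the assumed visual resolution."""
    return rows_constant() * diagonal_in / distance_ft

print(round(rows_constant(), 1))  # roughly 140
```

Passing `acuity_arcmin=0.47` instead would a little more than double the constant, which is why the choice of 1 arc minute matters so much to the conclusions.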

Now here’s a list of the most common screen resolutions:

| Standard | Number of pixels per row | Number of rows of pixels |
| --- | --- | --- |
| Standard Definition (SD) | 720 | 480 |
| Standard HD | 1280 | 720 |
| Full HD | 1920 | 1080 |
| Quad HD | 2560 | 1440 |
| Ultra HD (4K) | 3840 | 2160 |
| Full UHD (8K) | 7680 | 4320 |

So now let’s apply this to some real world examples…

Below is a table of screen sizes at their recommended viewing distances*. The results may surprise you:

| Screen size and viewing distance | Ideal screen resolution in rows (and standard required) |
| --- | --- |
| 15 inch laptop viewed at 18 inches | 1400 (Quad HD) |
| 24 inch desktop monitor viewed at 2 feet | 1680 (4K) |
| 32 inch desktop monitor viewed at 2 feet | 2240 (4K) |
| 32 inch TV viewed at 3.6 feet | 1244 (Quad HD) |
| 43 inch TV viewed at 4.8 feet | 1254 (Quad HD) |
| 65 inch TV viewed at 7.3 feet | 1247 (Quad HD) |
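
The figures in this table can be reproduced with a few lines of Python. This is only a sketch that plugs each screen size and viewing distance into the formula above, using the rounded constant of 140; the names `ideal_rows` and `setups` are illustrative.

```python
ROWS_CONSTANT = 140  # rounded constant from the formula above

def ideal_rows(diagonal_in, distance_ft):
    """Ideal number of pixel rows for a 16:9 screen at a given viewing distance."""
    return ROWS_CONSTANT * diagonal_in / distance_ft

# (description, diagonal in inches, viewing distance in feet)
setups = [
    ("15 inch laptop", 15, 1.5),
    ("24 inch desktop monitor", 24, 2.0),
    ("32 inch desktop monitor", 32, 2.0),
    ("32 inch TV", 32, 3.6),
    ("43 inch TV", 43, 4.8),
    ("65 inch TV", 65, 7.3),
]

for name, diagonal, distance in setups:
    print(f"{name} at {distance} ft: {round(ideal_rows(diagonal, distance))} rows")
```

Swap in your own screen size and sofa distance to see which standard you actually need.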

All of these examples suggest that the ideal resolution falls in the range between Quad HD and 4K. And interestingly, this shows that 4K is already overkill for normal TV viewing. To gain the full benefit you would have to sit much closer than the minimum distance recommended by THX. In fact, I’m willing to bet that for most real world situations, Full HD is already enough resolution. Check it yourself:

| Screen size (inches) | Full HD: maximum distance to see all detail (feet) | 4K: maximum distance to see all detail (feet) |
| --- | --- | --- |
| 32 | 4.1 | 2.1 |
| 39 | 5.1 | 2.5 |
| 43 | 5.6 | 2.8 |
| 55 | 7.1 | 3.6 |
| 65 | 8.4 | 4.2 |
| 82 | 10.6 | 5.3 |
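
These distances come from rearranging the same formula to solve for viewing distance. Here is a short Python sketch, again assuming the rounded constant of 140 and a 16:9 screen; `max_distance_ft` is an illustrative name, not part of any library.

```python
ROWS_CONSTANT = 140  # rounded constant from the resolution formula
FULL_HD_ROWS = 1080
UHD_4K_ROWS = 2160

def max_distance_ft(diagonal_in, rows):
    """Farthest viewing distance (feet) at which every row of pixels is still resolvable."""
    return ROWS_CONSTANT * diagonal_in / rows

for diagonal in (32, 39, 43, 55, 65, 82):
    full_hd = max_distance_ft(diagonal, FULL_HD_ROWS)
    uhd = max_distance_ft(diagonal, UHD_4K_ROWS)
    print(f"{diagonal} inch: Full HD {full_hd:.1f} ft, 4K {uhd:.1f} ft")
```

Sit farther away than the printed distance and the extra rows of that standard start to blur together.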

In other words, watching a 55 inch TV, you would have to sit closer than about 7 feet to really see any difference between Full HD and 4K.

More importantly, you would have to sit closer than about 3.6 feet to see any benefit from a resolution greater than 4K.

Where does that leave 8K?

If 4K is already more than enough resolution, where does 8K fit in? The short answer is that it doesn’t.

8K was really invented for immersive environments, such as wrap-around screens, planetariums, and virtual reality. (Basically, anything with a viewing angle over 60 degrees.) Sure, you can always sit really close to a large flat screen to get a more “immersive” experience, but what you gain from immersion you will lose from being at a distorted viewpoint. Flat screens were simply not designed to be viewed like this, nor was the content that we’re watching.

So in summary, 8K really is a step too far for your average flat screen TV – even a really big one. It’s meant for immersive environments and manufacturers are really being unfair to push 8K as the future of normal TV viewing.

What about recording video in 8K?

As of July 2020, Canon has launched its 8K monster, the Canon EOS R5. (I’m on the waiting list for one, although my main interest is shooting stills.)

Canon recognise that 8K is a niche market, and are promoting it not as an end consumer product, but as a means to capture raw footage.

Shooting in 8K opens up a world of editing options. You can crop, apply zoom, stabilisation and tracking, all in post production, and still produce a 4K end product.
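
To put a number on that editing headroom, here is a minimal Python sketch. It assumes the standard 16:9 frame sizes of 7680 x 4320 for 8K and 3840 x 2160 for 4K; the function names are purely illustrative.

```python
# Frame sizes as (pixels per row, rows of pixels).
EIGHT_K = (7680, 4320)
FOUR_K = (3840, 2160)

def crop_headroom(source, target):
    """Largest zoom factor available before the crop drops below the target size."""
    return min(source[0] / target[0], source[1] / target[1])

def spare_margin(source, target):
    """Pixels left over on each side (horizontal, vertical) around a centred target-sized crop."""
    return ((source[0] - target[0]) // 2, (source[1] - target[1]) // 2)

print(crop_headroom(EIGHT_K, FOUR_K))  # 2.0
print(spare_margin(EIGHT_K, FOUR_K))   # (1920, 1080)
```

A factor of 2.0 means you can zoom in to twice the magnification in post production, or keep a margin of 1920 pixels left and right and 1080 pixels top and bottom for stabilisation and tracking, and still deliver native 4K.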

The verdict

After close inspection, it’s safe to say that there really is very little need for 8K in the mainstream market. We have already reached the very real limits of human perception with 4K. It was designed that way. So if you are thinking about going out and buying an 8K TV next year to impress your friends, then there really are better ways to waste your money.

There is a real future, however, for high-end 8K cameras. If you plan on shooting in 8K to edit and produce better 4K footage, then yes. But not to make “better quality” 8K home videos straight out of the camera. Please, just don’t do it.

About the author

Paul Maguire is a professional photographer with a science background. He has a bachelor’s degree in Physics from Bath University and a master’s degree in Exploration Geophysics from Imperial College, London.